15 Sep 2019

I completed my thru-hike of the Appalachian Trail one year ago today. Here’s a quick-and-dirty guide for how to do it fast.

Have a plan

The only way you’re going to hike over 2,190 miles in sixteen weeks is if you have an idea of how far you need to hike each day. This naturally manifests itself in the form of an itinerary, which lists out where you plan to stay each night. You might know David “AWOL” Miller from his best-selling trail guide, but few people are aware he has itineraries on his website for free. You can give my itinerary a try as well.
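To make the daily target concrete, here is a rough sketch of the arithmetic. The function name and the `zero_days` parameter are my own illustration, not part of any itinerary tool; the 2,190 miles and sixteen weeks come from the paragraph above.

```python
def required_daily_miles(total_miles, weeks, zero_days=0):
    """Average miles per hiking day needed to finish on schedule.

    zero_days is a hypothetical parameter: rest days with no miles hiked.
    """
    hiking_days = weeks * 7 - zero_days
    if hiking_days <= 0:
        raise ValueError("zero_days must leave at least one hiking day")
    return total_miles / hiking_days


if __name__ == "__main__":
    # No rest days at all.
    print(round(required_daily_miles(2190, 16), 1))
    # Assuming ten zero days, purely as an example.
    print(round(required_daily_miles(2190, 16, zero_days=10), 1))
```

With no rest days the average works out to a little under 20 miles a day, and every zero day you take pushes the number up, which is why it helps to write the plan down.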

Some people will say having an itinerary takes away the magic of thru-hiking. These are the same people who will be eating your dust.

Pack light

The average thru-hiker takes between five and seven months to complete the A.T., with an average base weight of 19 pounds. If you want to go faster, you have to go lighter. Here’s an algorithm for cost-effectively getting a lighter pack:

- Weigh out everything you have in your pack, and find lighter alternatives on sites like OutdoorGearLab.

- Figure out how much weight you’d save if you bought the alternative, and divide it by price to get a savings-to-price ratio.

- Buy gear in descending order of the savings-to-price ratio.

- Stop when you don’t want to spend any more money.
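The steps above can be sketched in a few lines of Python. This is a minimal sketch under some assumptions: "stop when you don’t want to spend any more money" is modeled as a fixed budget, and the `Upgrade` class, its field names, and the gear numbers in the usage example are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Upgrade:
    """One candidate gear swap (hypothetical structure)."""
    name: str
    ounces_saved: float  # current item weight minus the lighter alternative
    price: float         # cost of the lighter alternative, in dollars

    @property
    def ratio(self):
        # Savings-to-price ratio: ounces saved per dollar spent.
        if self.price <= 0:
            raise ValueError("price must be positive")
        return self.ounces_saved / self.price


def plan_purchases(upgrades, budget):
    """Buy in descending order of ratio; stop at the first one you can't afford."""
    chosen = []
    remaining = budget
    for upgrade in sorted(upgrades, key=lambda u: u.ratio, reverse=True):
        if upgrade.price > remaining:
            break  # assumption: the budget stands in for "done spending"
        chosen.append(upgrade)
        remaining -= upgrade.price
    return chosen


if __name__ == "__main__":
    # Illustrative numbers only, not real gear data.
    candidates = [
        Upgrade("tent", ounces_saved=24, price=300),
        Upgrade("sleeping pad", ounces_saved=8, price=50),
        Upgrade("stove", ounces_saved=4, price=40),
    ]
    for item in plan_purchases(candidates, budget=100):
        print(item.name, round(item.ratio, 2))
```

Note that the loop stops at the first upgrade that doesn’t fit, mirroring the last step of the list. If you would rather keep looking for cheaper upgrades further down, swapping `break` for `continue` does that.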

My base weight was around 13.6 pounds. Get to a weight like that and you’re golden.

Be consistent

More than 80% of people who start an A.T. thru-hike don’t complete it. There are lots of reasons behind this: injury, improper budgeting, getting homesick. You can help yourself avoid some of these issues by being consistent. Learn the range for your pace and stick to it; set a budget and stick to it; make a plan for seeing friends and family and stick to it. If popular books are any indication, we’re just starting to realize how important habits are. If you form a good set of habits on the trail, knocking out miles will be the norm for you, not the exception.

Have a support system

If I can say one thing for certain, it’s that I wouldn’t have been able to complete the A.T. without my support system: my family. Here’s an incomplete list of what my family did to support me:

- My sister gave up her bed while I stayed at her home in Philadelphia.

- My brother drove 5 hours round-trip to drop off a water purifier when I unexpectedly ran out.

- My parents drove to North Carolina, New Jersey, New Hampshire, and Maine and took zero days with me.

- My brother let me stay at his place for a week while I recovered from illness.

Your support system needn’t be your family! It can be friends or relatives in various states, or even just some kind people in trail towns. Whoever it is, try and get help from them when you need it. You’ll be surprised how often people are willing to lend a hand.

Take help and be thankful

You may think that you’re completing the A.T. all by yourself, but the truth is you’ll be propelled to the terminus by a group of strangers.

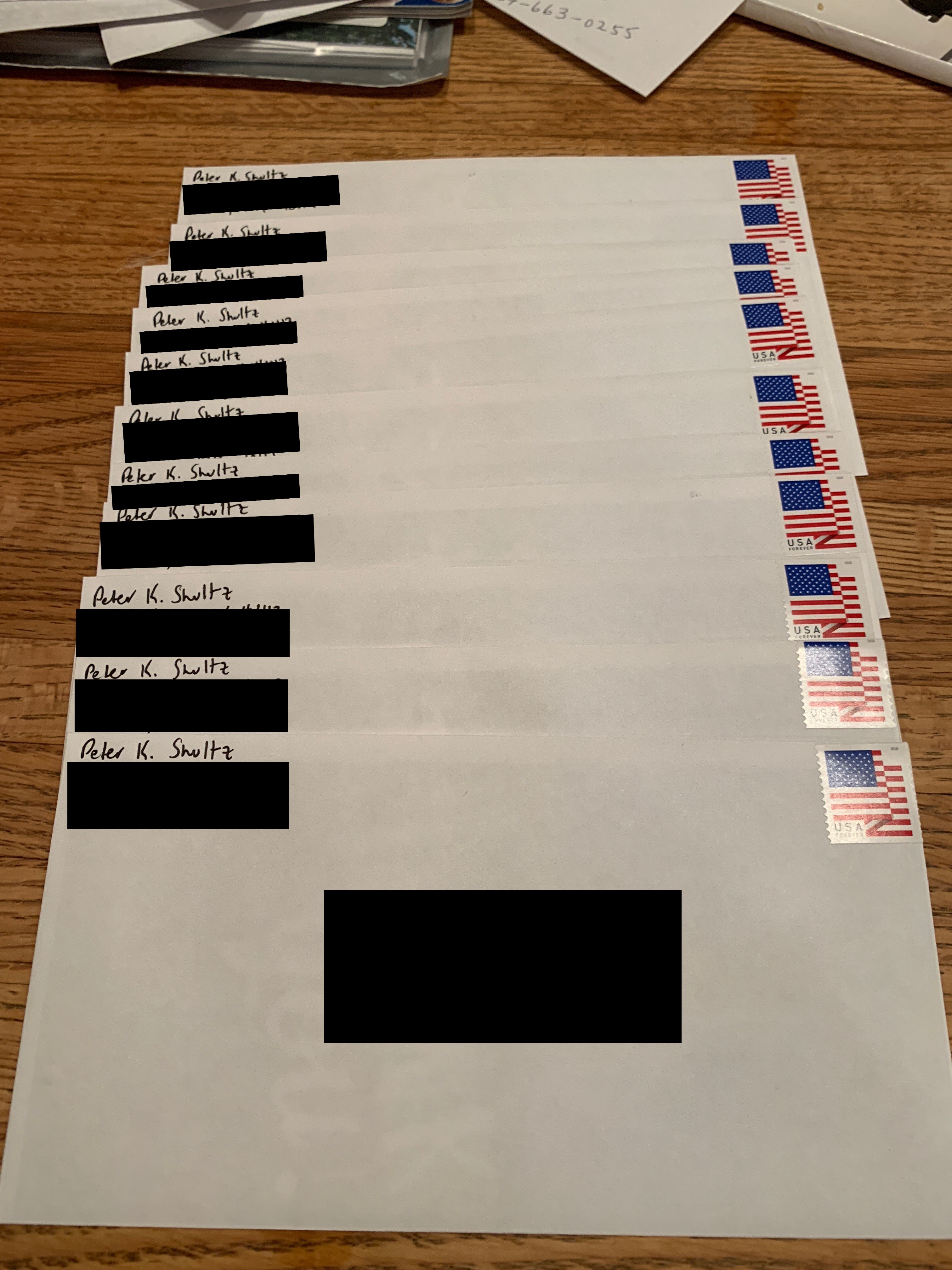

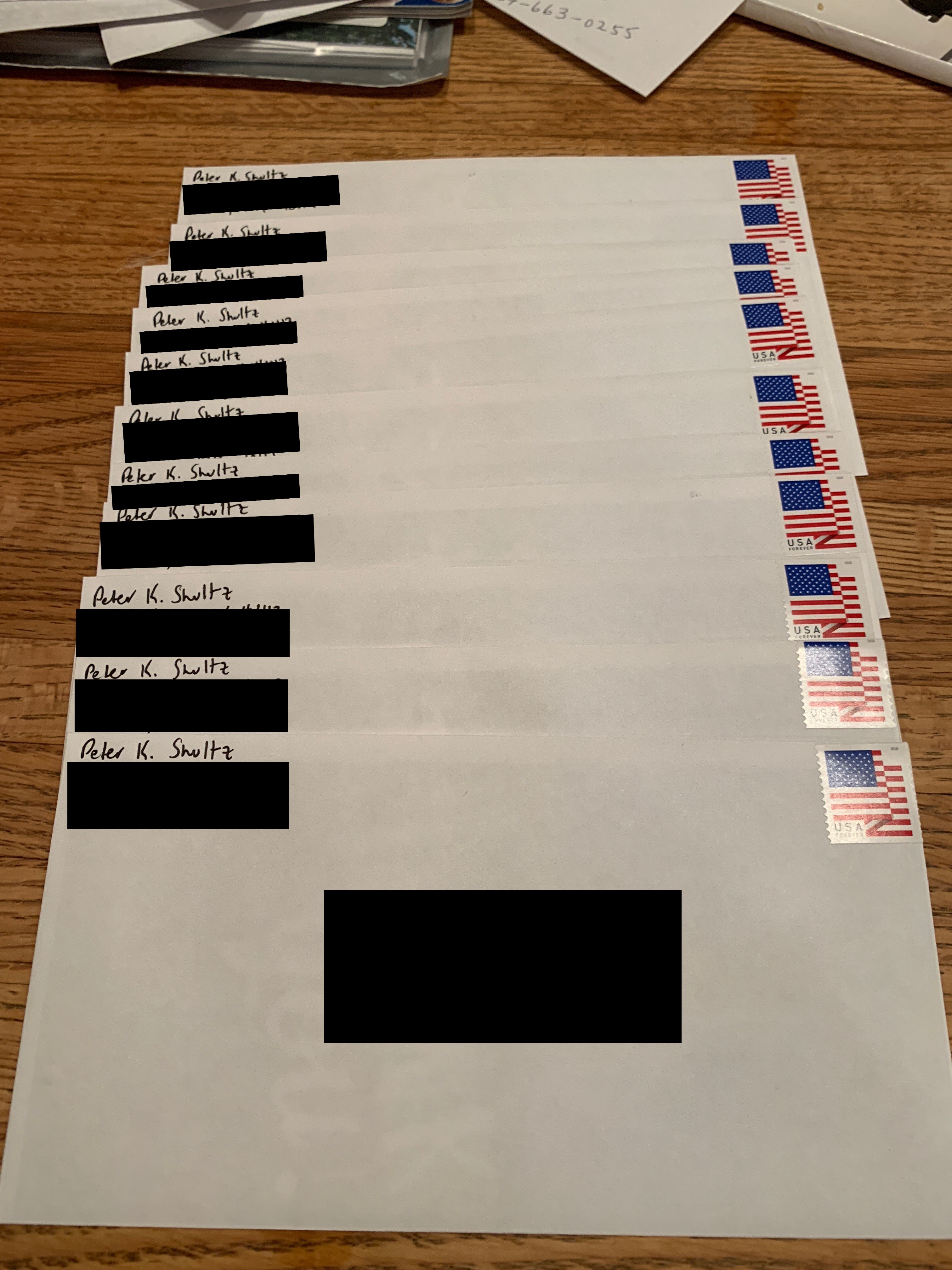

Whether it’s a family that buys you a meal after a bear eats your food, or a day hiker that gives you a Clif Bar, or a woman that drives you to a gas station to re-supply, there will be lots of people that show up for you as trail angels. I recommend taking down their name and address and sending them a thank-you note when you complete your thru-hike (the photo to the left is all the thank-you notes I wrote when I finished). Not only will it make them smile, but it will also motivate you to keep going when times are tough—after all, you don’t want to disappoint them.

That’s all I have for now. If you’re considering hiking the A.T. and want to talk through any plans, feel free to reach out. My email’s at the top of my CV.

22 Mar 2019

I started my job at Microsoft five months ago today. I had the opportunity to write a small post about what I do for Wes Weimer, my former software engineering professor. I thought I’d share some of it here.

Hi everyone! I’m a program manager (PM)1 at Microsoft, working on high-performance computing. Professor Weimer asked me to write about my job to give you some industry perspective on what you’re learning right now.

Large software companies have an interesting problem: they have a lot of work to do on each of their products. Whether that’s implementing a new feature, improving performance, or working with another team to create a product integration, there’s often so much work going on that it’s hard to prioritize what to do next. That’s where PMs come in!

For every 5 to 15 software engineers writing code to build or improve a product, there’s one program manager figuring out what those software engineers should be working on next. With that comes a whole other bunch of responsibilities: specifying the behavior of new features; working with partner teams who have a stake in decisions you make; and representing your product in meetings with finance, marketing, and documentation folks. The list goes on and on.

Everybody has a different definition of what PMs do. Many people say a PM’s job is to be “the voice of the customer”, but that makes software engineers sound ignorant of the customer, which they’re not. Software engineers and PMs both have the needs of the customer in mind–it’s just that PMs have a better understanding of what customers need most because they’re busy prioritizing everything that needs to get done to improve the product.

At least at Microsoft, PMs don’t actually tell software engineers what to do. Rather, program managers persuade software engineering managers2 that certain things need to get done. If our arguments are persuasive, software engineering managers will allocate their software engineers to work on what we think matters most. Software engineering managers often call this “funding” a certain piece of work (i.e. “we’ll fund that feature you seem to care about so much”).

From the perspective of a PM, slide 6 of the “Requirements and Specifications” lecture really drives home an important point: if a mistake happens and it’s not discovered until later, it becomes very expensive to fix. So expensive, in fact, that you won’t be able to deliver on all the other features you promised (and who likes breaking a promise?). As a result, priorities need to be reset, and somebody–you, a friend, that team you were working with–is going to be unhappy.

That’s why PMs are often assigned to write specs–documents that describe [in painstaking detail] the requirements for how a new feature or integration is going to work (see slide 33 of the “Elicitation, Validation and Risk” lecture). There’s no doubt that software engineers could write specs if they wanted to–it isn’t too hard. But why burden them with more work when they could be coding?3

Feel free to ask any questions in the follow-up section. I’ll answer as best as I can.

1 Depending on where you work, PM can mean program manager, product manager, or project manager. Each of those job titles means something different depending on who you ask. ↩

2 If you become a software engineer, your boss is a software engineering manager. At Microsoft, software engineering managers assign programming tasks (“work items”) to the engineers that report to them (their “direct reports” or “directs”). They’re often responsible for leading design and [re-]architecture efforts as well. ↩

3 Probably the easiest way to describe what a PM does is “anything that would keep a software engineer from checking in code”. ↩

12 May 2018

I’ve talked about the concept of flow here before. As I focus on writing and meditating more, I’m going to start keeping track of conditions when I experience flow.

The idea is that if I can find similarities in the conditions before, during, and after flow, I can try to reliably reproduce the mental state. Things I’ll track include:

- Date

- Time

- Weather

- Mental state

- Duration of flow

- Activities preceding flow

- Activities completed during flow

- Activity when flow ended

- Substance of last meal

- Hours since last meal

- Hours of sleep the previous evening
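If you’d rather keep the journal in code than on paper, here is one possible shape for an entry. It’s only a sketch: the `FlowEntry` class, its field names and units, and the `most_common_value` helper are my own assumptions layered on top of the list above.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, time


@dataclass
class FlowEntry:
    """One journal entry; fields mirror the list above (names are hypothetical)."""
    day: date
    start_time: time
    weather: str
    mental_state: str
    duration_minutes: int
    activities_before: str
    activities_during: str
    activity_at_end: str
    last_meal: str
    hours_since_last_meal: float
    hours_of_sleep: float


def most_common_value(entries, field_name):
    """Return (value, count) for the most frequent value of one field, or None."""
    counts = Counter(getattr(entry, field_name) for entry in entries)
    return counts.most_common(1)[0] if counts else None
```

Once a few entries exist, calling something like `most_common_value(entries, "weather")` is a crude first pass at spotting the similarities the journal is meant to surface.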

This project will be similar to a decision journal, which I first learned about over at Farnam Street. You’ll note that I borrowed some of the entry items from their template.

If you like the idea, try it out. Email me your thoughts.

26 Nov 2017

Proprietary trading is all about having an edge–firms can’t make a dime unless they know something no one else does (or, for the more skeptical, unless they guess at something correctly that nobody else guesses at correctly).

As director of sponsorship for a large hackathon, I read a lot of recruitment pages to understand if a company would agree to a sponsorship deal. Many trading firms recruit from the same places: a few of the Ivy Leagues, MIT, and the University of Chicago. A handful of Chicago firms recruit at Michigan and UIUC as well.

There might not be a problem with that educational homogeneity: companies are better when smart people work for them; trading is no exception. Since smart people go to shiny schools, you get the most bang for your recruiting buck by going to shiny schools exclusively.

But for an industry where edge determines whether you eat or starve, wouldn’t you expect there to be a little more educational diversity (or any diversity at all)? I’d posit that smart students from universities with less name recognition think differently than those at universities with more, by nature of the fact that they didn’t go to the same school. You might say that high-caliber universities create diverse student bodies–and therefore diverse ways of thinking–but recent data suggest otherwise. Racial diversity has been dwindling at elite schools over the past three decades.

I see diversity in educational training as an advantage that translates directly to alpha generation. McKinsey research has suggested this same idea for years: diversity helps a company’s bottom line, period. I wager that trading is no different.

These are just my musings. But if I ever start a proprietary trading firm, don’t expect me to be recruiting solely from two schools in Cambridge.

Thanks to Kellie Spahr for reading a draft of this post.

05 Aug 2017

I recently read an interview with Gary Marcus, a professor of psychology and neural science at NYU. In the interview, he questions whether the big data approach to AI–that of training neural networks on terabyte-scale datasets–is successfully moving humanity towards the intelligent, sentient computers that we all dream of.

Roughly 1⅓ years have passed since that interview, and Marcus has now resolved his own question with a piece in the New York Times: “trendy” neural networks are not moving us in the right direction. He says this is because they lack basic concepts of the world that humans seem to learn by osmosis, like the natural laws of physics or linguistics.

I’d argue Marcus is jumping ahead a bit–nobody is saying that neural networks are the only key to AI’s future. I imagine an AI system in the future will encompass multiple systems, much like the human brain: a neural network for pattern recognition, a memory store for recalling things it has learned in the past, a physics simulator, a sentiment analyzer for understanding emotion. Most of these things already exist, and perform pretty well independently. The exercise now becomes piecing all of them together.

One other point I think Marcus fails to address is that of quantum computing. While it’s correct to say that our current AI methods are held back by computational resources, it’s incorrect to say that there’s no solution to that on the horizon. Ask anyone who’s working on quantum right now and they’ll say that the technology will transform the way we live within our lifetime. I think the computational limitations Marcus alludes to will be lifted by quantum computers, and conversations I’ve had with quantum researchers suggest the same thing.

Large scale collaborations are all well and good. But I think preparing AI research for the advent of practical quantum computers is the most important next step for the discipline.